Improve the quality of your solution from a DevOps perspective

Developing ETL or ELT solutions in smaller or larger teams has never been easier with the help of DevOps. It lets us be certain that the solution still works after multiple team members have made changes in different parts of it: for example, changes in database stored procedures, tables, and views, combined with changes to Azure Data Factory pipelines and to the setup of the deployment pipeline (CI/CD).

DevOps teaches us to automate as much as possible to make the process repeatable, and to work with source control and frequent check-ins of code. Implementing Continuous Integration and Continuous Delivery (CI/CD) helps us deliver quickly and reliably, without manual dependencies.

Together with a small team, we are working on a data platform ‘solution accelerator’. This accelerator enables us to easily deploy a working end-to-end solution including Azure SQL Database, Azure Data Factory, and Azure Key Vault.

We are always improving and adding new features to our solution. As a product owner, I want to make sure my product isn’t broken by these additions. A good branching strategy aside, automating as many tests as we can helps us achieve this.

The latest improvement to our deployment pipeline is to trigger an Azure Data Factory (ADF) pipeline from our deployment pipeline and monitor the outcome. In this case, the result determines whether the pull request is allowed to be completed, which reduces the chance of ending up with a ‘broken’ main branch.

In this article, I will give an overview of the steps in our build and release pipeline and provide the scripts for triggering and monitoring the ADF pipeline.

Please note the difference between pipelines. On the one hand, there are Azure Data Factory pipelines, whose main task is the movement of data. On the other hand, there are CI/CD or deployment pipelines, which are used to deploy resources to our Azure subscription and live in our Azure DevOps project.

Please note that there are many kinds of tests that you can perform. In this example we are merely checking if the ADF pipeline 'is doing things right', in contrast to 'is it doing the right things'!

Azure DevOps pipeline setup

The setup we are currently using has, among others, the following elements:

- GitHub repository

- Azure Resource Manager templates (ARM templates)

- Visual Studio Database project

- Azure Data Factory pipelines in JSON format

- Azure DevOps project

- Azure Pipelines in YAML

- Build and Validation stage

- Release stages

Azure Pipelines in YAML

I strongly advise using YAML syntax to define your pipelines instead of the classic editor. The biggest advantage is being able to version control your pipeline together with the corresponding version of your code. See the references for the documentation.

Pipeline setup – Build and Validation stage

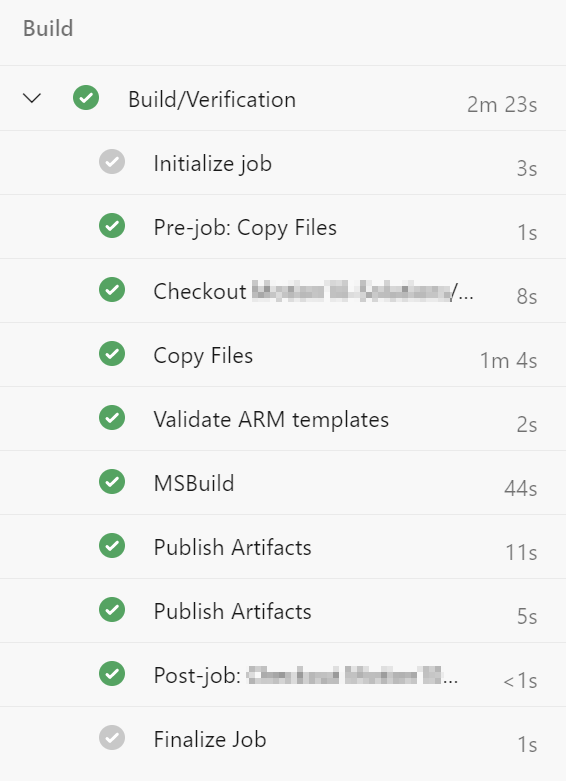

The pipeline has two kinds of stages: a ‘Build and Validation’ stage and multiple ‘Release’ stages.

The ‘Build and Validation’ stage has two main objectives:

- validating the ARM Templates

- building the database project

The results of these tasks are published as artifacts to be used in the release stages.

Pipeline setup – Release stage

The ‘Release’ stages deploy all resources in the following order:

- deployment of ARM Templates

- deployment of ADF objects (pipelines, datasets, etc.)*

- deployment of SQL Database project

*Tip for deploying Azure Data Factory: we had many discussions about this topic, but in the end chose to use an extension built by Kamil Nowinski instead of the Microsoft approach. There are many quality-of-life improvements in this extension!

Pipeline setup – Release stage Triggers

The release stage of the deployment pipeline is repeated for the different environments we defined. In our situation, we are using development, test, and production. The triggers for these environments are a result of the git branching strategy we chose.

It is in this release stage, to the ‘test’ environment, that we implemented a PowerShell task that triggers and monitors an ADF pipeline run. The release to the test environment is triggered when a pull-request in the GitHub repository is initiated.
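As an illustration of how such a trigger could look in YAML, here is a minimal sketch. It assumes the pull requests target a branch called `main`; the stage names, job names, and the placeholder `script` steps are hypothetical and not taken from our actual pipeline. The `pr` block makes the pipeline run for pull requests in the GitHub repository, and the condition on the stage limits the release to ‘test’ to those pull request runs.

```yaml
# Run the pipeline when a pull request targeting 'main' is opened or updated.
# 'main' is an assumed branch name.
pr:
  branches:
    include:
      - main

stages:
  - stage: BuildAndValidate            # hypothetical stage name
    jobs:
      - job: Build
        steps:
          - script: echo "validate ARM templates and build the database project"

  - stage: ReleaseTest                 # hypothetical stage name
    dependsOn: BuildAndValidate
    # Only release to 'test' when the run was started by a pull request.
    condition: and(succeeded(), eq(variables['Build.Reason'], 'PullRequest'))
    jobs:
      - job: Deploy
        steps:
          - script: echo "deploy to test, then trigger and monitor the ADF pipeline"
```

The triggers for the other environments depend on the branching strategy and are left out of this sketch.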

Trigger and Monitor ADF Pipeline Run

There are multiple ways to trigger a pipeline other than the ADF User Interface. Here we use PowerShell because it is easily incorporated into the deployment pipeline. Below is an example of an Azure PowerShell task that executes a script from your repository.
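A minimal sketch of such a task is shown here. The service connection name, the script path, the script parameter names, and the variables in `ScriptArguments` are all hypothetical; replace them with the values from your own project.

```yaml
steps:
  - task: AzurePowerShell@5
    displayName: 'Trigger and monitor ADF pipeline run'
    inputs:
      azureSubscription: 'my-service-connection'    # hypothetical service connection
      ScriptType: 'FilePath'
      # Hypothetical location of the script in the repository
      ScriptPath: '$(Build.SourcesDirectory)/scripts/Invoke-AdfPipeline.ps1'
      # Hypothetical script parameters and pipeline variables
      ScriptArguments: >-
        -ResourceGroupName "$(resourceGroupName)"
        -DataFactoryName "$(dataFactoryName)"
        -PipelineName "$(adfPipelineName)"
      azurePowerShellVersion: 'LatestVersion'
      pwsh: true
```

The task signs in to Azure with the service connection before running the script, so the script itself does not need to handle authentication.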

The Microsoft documentation offers a great starting point for the PowerShell script. After combining the statements for triggering and monitoring I made two important modifications:

- the loop now also keeps waiting while the run has status ‘Queued’; the original script would break out of the loop in that case

- if the outcome does not equal ‘Succeeded’, we throw an error
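The two modifications can be sketched as follows. This is not the exact script from our repository but a minimal version built on the `Invoke-AzDataFactoryV2Pipeline` and `Get-AzDataFactoryV2PipelineRun` cmdlets from the Az.DataFactory module. The function name, the parameter names, and the polling interval are assumptions made for this example.

```powershell
# Hypothetical helper: triggers an ADF pipeline, waits for it to finish
# and throws if the run does not succeed.
function Invoke-AdfPipelineAndWait {
    [CmdletBinding()]
    param(
        [Parameter(Mandatory)] [string] $ResourceGroupName,
        [Parameter(Mandatory)] [string] $DataFactoryName,
        [Parameter(Mandatory)] [string] $PipelineName,
        [int] $PollIntervalSeconds = 30   # assumed default
    )

    # Trigger the ADF pipeline; the cmdlet returns the ID of the new run.
    $runId = Invoke-AzDataFactoryV2Pipeline `
        -ResourceGroupName $ResourceGroupName `
        -DataFactoryName $DataFactoryName `
        -PipelineName $PipelineName
    Write-Host "Started '$PipelineName', run ID: $runId"

    # Statuses that mean the run has not finished yet. 'Queued' is included
    # so the loop keeps waiting instead of stopping before the run has started.
    $pending = @('Queued', 'InProgress', 'Canceling')

    while ($true) {
        $run = Get-AzDataFactoryV2PipelineRun `
            -ResourceGroupName $ResourceGroupName `
            -DataFactoryName $DataFactoryName `
            -PipelineRunId $runId

        if ($run -and $run.Status -notin $pending) { break }

        Write-Host "Pipeline run status: $($run.Status)"
        Start-Sleep -Seconds $PollIntervalSeconds
    }

    # Any outcome other than 'Succeeded' raises an error, which fails the task.
    if ($run.Status -ne 'Succeeded') {
        throw "ADF pipeline '$PipelineName' ended with status '$($run.Status)'. $($run.Message)"
    }

    Write-Host "Pipeline run succeeded."
    return $run
}
```

In a script file that is executed by the deployment pipeline, the function definition would be followed by a call such as `Invoke-AdfPipelineAndWait -ResourceGroupName $ResourceGroupName -DataFactoryName $DataFactoryName -PipelineName $PipelineName`, with the values passed in as script parameters.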

Throwing the error ensures that the task in the release stage fails, and therefore the whole pipeline gets the status ‘Failed’. This is important because it prevents the pull request from being completed until the error is resolved in another commit to the repository.

That’s it! Please share any tips on even more improvements!

References

Azure Pipelines | Microsoft Docs

Azure Pipelines – Azure PowerShell task | Microsoft Docs

Azure Data Factory – PowerShell | Microsoft Docs

Azure Data Factory – Continuous integration and delivery in Azure Data Factory | Microsoft Docs
